In the first post in our 'Discover Stats' Blog Series we explain what stats is, what it isn't and why you should take the time to learn the basics...

No, wait, stop running - it's really not that difficult. Honest!

Come back and let me explain...

Disclosure: This post contains affiliate links. This means that if you click one of the links and make a purchase, we may receive a small commission at no extra cost to you. As an Amazon Associate, we may earn an affiliate commission for purchases you make when using the links on this page.

You can find further details in our T&Cs.

What Statistics Isn't

Statistics isn't some mystical black art.

You don't need runes, capes, daggers or to sacrifice a virgin at the full moon. Well, not unless you really want to.

They're optional extras...

It's actually quite straightforward.

Well, beginner statistics is easy. It does get more complicated as you go a bit deeper, but by the time you've mastered the basics you'll have enough experience to handle it.

Basically, statistics is about making good choices. When you're doing a particular analysis there are usually a whole bunch of different ways you can do it, and it's not often the case that there is only one right way and all other ways are wrong.

There is usually a spectrum of possible approaches and some are more appropriate than others.

Just get 2 statisticians together and ask them to discuss your research project - I guarantee they'll agree about the broad strokes and disagree about most of the details...

Your job as the data analyst is to arm yourself with enough knowledge to consistently make good choices about how to deal with data.

Unless you're a top statistician, you don't need to make the best choice every time.

I'll let you into a secret. Most statistical measures are, to use a surgical metaphor, more like using a cleaver than a laser scalpel. They're really not that precise, so stop worrying about it and let's dive in and find out about the easy stuff.

I promise it'll be quite painless...

Statistics is not a Dark Art - it's just much misunderstood...

OK, So What Is Statistics?

Statistics is the study of the analysis, interpretation and presentation of data, and in particular of measuring and controlling uncertainty.

It is the 'uncertainty' that makes statistics a distinct flavour of mathematics, and is the reason that statisticians argue a lot.

What Is Statistics Good For?

We usually collect data to answer interesting and important questions, such as:

- By how much has my daughter's class grown since last year?

- Is smoking related to lung cancer?

- Will it rain more in Denmark next year than it did last year?

There are really only 2 uses of statistics, to describe and to predict, and we call these:

- Descriptive Statistics

- Inferential Statistics

While we would like to collect data from the entire population, such as

- all patients with breast cancer

- everyone who bought an iPad

- the height of everyone in Finland

We rarely have the resources to do so, so instead we collect a sample from the population. We then use descriptive statistics to describe the features of our sample and inferential statistics to infer the features of the whole population.
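To make the sample/population distinction concrete, here is a minimal Python sketch. It assumes an invented, simulated "population" of heights (the mean of 175 cm and SD of 9 cm are made up, not real figures for Finland), draws a random sample, describes that sample, and then compares the sample mean with the population mean, something only a simulation lets you do.

```python
import random
import statistics

# Hypothetical population: 100,000 simulated adult heights in cm.
# The mean (175) and SD (9) are invented, not real data for Finland.
random.seed(42)
population = [random.gauss(175, 9) for _ in range(100_000)]

# We can rarely measure everyone, so draw a simple random sample of 200.
sample = random.sample(population, 200)

# Descriptive statistics: summarise the sample we actually measured.
sample_mean = statistics.mean(sample)
sample_sd = statistics.stdev(sample)
print(f"Sample:     mean {sample_mean:.1f} cm, SD {sample_sd:.1f} cm")

# Inferential statistics would use the sample mean as an estimate of the
# population mean. In a simulation we can peek at the true value;
# in a real study we can't.
population_mean = statistics.fmean(population)
print(f"Population: mean {population_mean:.1f} cm")
```

Change the seed and the sample mean shifts slightly each time, even though the population hasn't changed.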

And here is where 'uncertainty' comes in. Selecting samples from the population involves a certain amount of randomness, so our statistical measures always carry some uncertainty.

If we have designed our data collection procedures correctly, the uncertainty in the data will be small and we will have a high degree of confidence in the answers to our questions.

On the other hand, poor experimental design will give us a large uncertainty in the data and the answers to our questions won't be worth the paper they're written on.

They might as well be printed on soft, quilted, 3-ply paper...

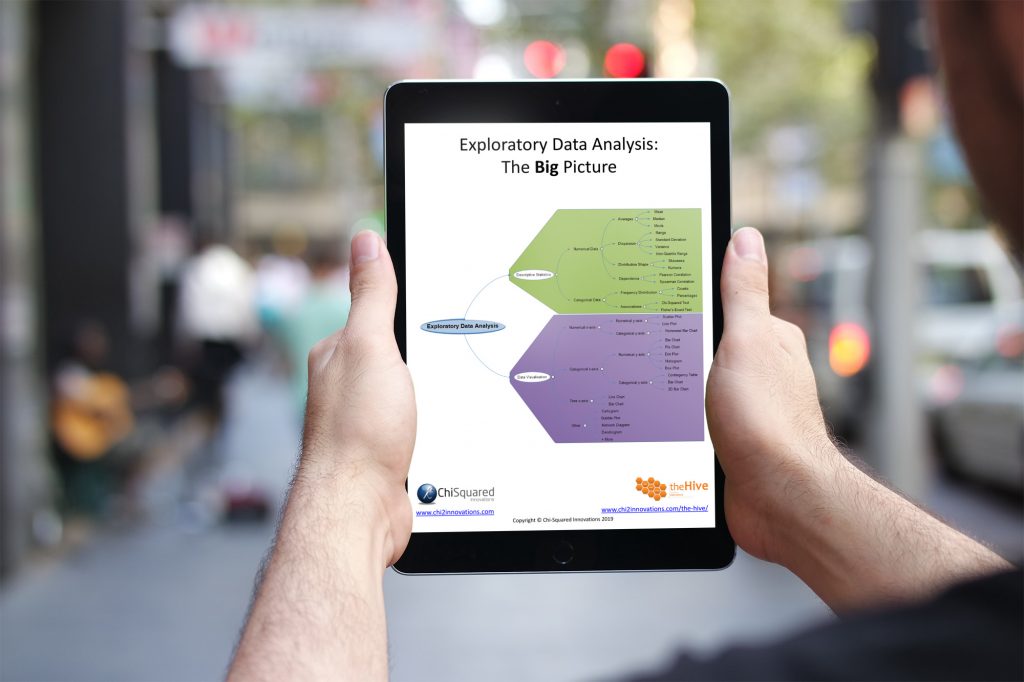
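How much uncertainty we end up with depends partly on how much data we collect. The Python sketch below uses an invented simulated population and a hypothetical helper, `spread_of_sample_means`. It repeatedly draws random samples of two different sizes and measures how much the sample means vary. Theory says this spread (the standard error) is roughly σ/√n, so with σ = 9 cm we would expect about 2.8 cm for n = 10 and about 0.28 cm for n = 1000.

```python
import random
import statistics

# Hypothetical population of 100,000 simulated heights in cm
# (mean 175, SD 9: invented values for illustration).
random.seed(1)
population = [random.gauss(175, 9) for _ in range(100_000)]

def spread_of_sample_means(n, repeats=500):
    """Draw `repeats` random samples of size n; return the SD of their means."""
    means = [statistics.fmean(random.sample(population, n)) for _ in range(repeats)]
    return statistics.stdev(means)

small = spread_of_sample_means(10)
large = spread_of_sample_means(1000)
print(f"n = 10:   sample means vary with SD of about {small:.2f} cm")
print(f"n = 1000: sample means vary with SD of about {large:.2f} cm")
```

Sample size is only one ingredient of good design. A huge sample collected in a biased way will still give a precise-looking answer to the wrong question.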

Descriptive Statistics

This branch of statistics is used to summarise your data, and typically includes things like:

- Averages (mean, median, mode)

- Variation (standard deviation, confidence intervals)

- Frequency and Percentages
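As a rough illustration, Python's built-in `statistics` module and `collections.Counter` cover all three kinds of summary in the list above. The goals data below is invented purely for the example.

```python
import statistics
from collections import Counter

# Hypothetical data (invented for illustration): goals scored in 12 matches.
goals = [0, 1, 1, 2, 2, 2, 3, 1, 0, 2, 4, 2]

# Averages
mean = statistics.mean(goals)
median = statistics.median(goals)
mode = statistics.mode(goals)

# Variation: sample standard deviation (divides by n - 1)
sd = statistics.stdev(goals)

# Frequencies and percentages for each distinct value
counts = Counter(goals)
percentages = {value: 100 * n / len(goals) for value, n in sorted(counts.items())}

print(f"mean = {mean:.2f}, median = {median}, mode = {mode}, SD = {sd:.2f}")
for value, pct in percentages.items():
    print(f"{value} goals: {counts[value]} matches ({pct:.0f}%)")
```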

It doesn't make much sense to publish averages without some measure of variation - the variation gives you an idea of the amount of uncertainty in the data.

If you were to see the following 2 measurements, which one would you have more confidence in?

- 25 ± 19

- 25 ± 3

Both have the same central value, but the uncertainty in the 1st is considerably higher than in the 2nd.
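A quick way to see this is to summarise two small datasets that share the same mean but have very different spreads. This sketch assumes the ± figure is a standard deviation (it could equally be a standard error or a confidence-interval half-width), and the numbers are invented.

```python
import statistics

# Two hypothetical sets of measurements (invented) with the same mean
# but very different amounts of spread.
wide = [5, 10, 25, 40, 45]
narrow = [22, 24, 25, 26, 28]

for name, data in [("wide", wide), ("narrow", narrow)]:
    m = statistics.mean(data)
    sd = statistics.stdev(data)  # sample standard deviation
    print(f"{name}: {m} ± {sd:.1f}")
```

This prints 25 ± 17.7 for the wide set and 25 ± 2.2 for the narrow one: same average, very different levels of confidence.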

Similarly, suppose you're a basketball manager and your chief scout reports back that prospect 1 and prospect 2 each made 5 baskets in their last match. Which one would you be more interested in signing? On the surface they seem evenly matched, but if the scout also reported that prospect 1 took only 5 shots at the basket and prospect 2 took 3 times as many, that would change everything, wouldn't it? A 100% success rate vs a 33% success rate.
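The scout's arithmetic, written out as a tiny Python sketch using the numbers from the example (5 baskets each, from 5 and 15 shots):

```python
# Shots and baskets for each prospect, taken from the scout's report.
baskets = {"prospect 1": 5, "prospect 2": 5}
shots = {"prospect 1": 5, "prospect 2": 15}  # prospect 2 took 3 times as many shots

# Success rate = baskets made / shots taken
success_rate = {player: baskets[player] / shots[player] for player in baskets}

for player, rate in success_rate.items():
    print(f"{player}: {baskets[player]}/{shots[player]} shots = {rate:.0%}")
# prospect 1: 5/5 shots = 100%
# prospect 2: 5/15 shots = 33%
```

The raw count (5 baskets) hides the difference; dividing by the number of attempts reveals it.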

So if you want to keep your statistician happy, always present him/her with measures of variability in your summary statistics.

It also helps to give alcohol!

Inferential Statistics

As the name suggests, we use these measures when we want to use patterns in the sample data to infer (or predict) the answers to our questions about the whole population, taking into account the randomness and uncertainty of the sampling process.

Some of the most common inferential statistical tests are:

- Associations (correlation tests)

- Relationships (regression analyses)

As above, all results should be accompanied by some measure of variation, such as confidence intervals, so that both the size of the effect you are investigating and the uncertainty surrounding it are reported.
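As a minimal sketch of reporting an estimate together with its uncertainty, the hypothetical helper `mean_with_ci` below computes a 95% confidence interval for a mean. It assumes a normal (z) approximation, which is reasonable for larger samples; for small samples a t-based interval would be wider and more appropriate. The reaction-time data is invented.

```python
import math
import statistics
from statistics import NormalDist

def mean_with_ci(data, level=0.95):
    """Return (mean, lower, upper) using a normal (z) approximation.

    Assumes a reasonably large sample; for small samples a t-based
    interval would be more appropriate.
    """
    m = statistics.mean(data)
    se = statistics.stdev(data) / math.sqrt(len(data))  # standard error of the mean
    z = NormalDist().inv_cdf(0.5 + level / 2)           # about 1.96 for 95%
    return m, m - z * se, m + z * se

# Hypothetical reaction times in milliseconds (invented for illustration).
times = [312, 298, 305, 321, 290, 310, 300, 315, 295, 308,
         302, 318, 299, 306, 311, 294, 309, 303, 316, 297,
         304, 313, 296, 307, 301, 319, 293, 314, 300, 305]

m, lo, hi = mean_with_ci(times)
print(f"mean = {m:.1f} ms, 95% CI {lo:.1f} to {hi:.1f} ms")
```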

Experimental Design

I wish I had a large bag of cash for every time a scientist brought me the results of an important experiment and asked me to analyse the data, only for me to find a fatal flaw in the experimental design that rendered the results useless. I would have, errr, a lot of bags of cash right now.

Actually, no I wouldn't, because I would have happily returned the large bag of cash so they could find another statistician to miraculously 'rescue' their experiment...

Here's an example of a flawed experiment. See if you can spot the fatal error:

A psychologist conducted an experiment to determine whether music had an impact on problem solving. His design was to get his subjects to solve puzzles under the following conditions (in this order):

- In silence

- Listening to classical music

- Listening to jazz

He measured how long it took to complete each of the tasks and summarised the results.

Spotted the flaw yet? Well, the results were as follows:

- The 1st puzzle took the longest

- The 2nd puzzle was completed in a shorter time

- The 3rd puzzle was completed quickest

Conclusion? Music had a positive effect on the ability to solve problems, and people were better at solving problems when listening to jazz.

Absolute rubbish! The flaw was that the experiment captured 2 different effects and there was no way to distinguish between them. The music may have had an impact on problem solving, but the results were contaminated because the subjects were getting better at solving the puzzles with practice.

How would you change the experiment to eliminate the 'learning effect'?

(Hint: the answer rhymes with 'shmandomise'...).
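One way to act on the hint, sketched in Python: give every subject the three conditions in a random order, drawn in shuffled blocks of all six possible orders so that each order (and each condition in each position) is used equally often. This is a form of counterbalancing; the helper name `assign_orders` and the group of 12 subjects are hypothetical.

```python
import itertools
import random

# The three conditions from the (flawed) music experiment.
CONDITIONS = ["silence", "classical music", "jazz"]

def assign_orders(n_subjects, seed=None):
    """Give each subject a randomly chosen order of the three conditions.

    Orders are drawn in shuffled blocks of all 6 permutations, so every
    order is used equally often when n_subjects is a multiple of 6.
    """
    rng = random.Random(seed)
    all_orders = list(itertools.permutations(CONDITIONS))
    schedule = []
    while len(schedule) < n_subjects:
        block = all_orders[:]
        rng.shuffle(block)
        schedule.extend(block)
    return schedule[:n_subjects]

for subject, order in enumerate(assign_orders(12, seed=7), start=1):
    print(f"subject {subject:2d}: {' -> '.join(order)}")
```

This doesn't stop people learning, but it spreads the learning effect evenly across silence, classical and jazz, so it can no longer masquerade as a music effect.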

The Take-Home Message

Well, I hope that by now you've got a reasonable idea of what statistics is and what it can be used for.

In short, statistics is:

- The study of the uncertainty of data

- Used to describe and summarise features and uncertainty about your data

- Used to predict features about larger populations of data, given the uncertainty in your sample population

- Used to answer many everyday questions about science, politics, sport and business - but only if you design your experiments correctly and collect your data carefully

Oh, and don't forget that when you take your data to a statistician, go armed with a smile, measures of variability and a large bottle of his/her favourite alcoholic beverage.

I guarantee you the whole process will be that much easier...