Disclosure: this blog post may contain affiliate links. As an Amazon Associate we earn from qualifying purchases.

Here's a joke about how to clean data: Did you know that Data Scientists spend 80% of their time cleaning data and the other 20% complaining about it?

Yeah, you're right - it's not funny. But then there isn't anything funny about data cleaning.

It's not just the Data Scientists that complain about it though, it's pretty much everybody that works with data.

It doesn't have to be so painful though. Learn a few data cleaning tips, tricks and techniques and it can go pretty smoothly and it'll all be over quite quickly.

Data cleaning is all about getting organised, and this blog post will introduce you to the 5 data cleaning steps that will help you put the basics in place. Once you've got these, the rest will follow.

Let’s face it – cleaning data is a waste of time.

If only the data had been collected and entered carefully in the first place, you wouldn’t be faced with days of data cleaning to do. Worse still, your boss probably doesn’t understand why you can’t just do it in a few minutes. After all, you only need to click a few buttons in Excel, don’t you?

Yeah, right…

Well, we all hate data cleaning, but if we get organised and learn a few tricks there are ways to fast-track it and get it done in a fraction of the time.

In fact, there are just 5 steps to getting your data clean and analysis-ready quickly and painlessly.

How to Clean Data: 5 Data Cleaning Steps Anybody Can Follow

Data Cleaning Step 1: Plan, Plan, Plan – Then Plan Some More…

I’ve been involved in many studies at the data collection stage without having been consulted when the study was planned. In every single case, it turned out that the study had not been thought through properly, there were big problems with it and we had to go back to the beginning and plan it all again. I guess they all thought there was no need to involve a data analyst until they actually had some data.

Oh, how wrong they were…

You see, data analysts and statisticians start thinking about the end game right at the beginning, and that includes deciding which statistical tests will be used on the data, even before the data have been collected. They’ll consider which variables are important, which interactions should be interrogated and which statistical package will be used for the analysis.

Each statistical package has its own particular quirks, and if you know what they are you can arrange your data accordingly right from the beginning.

This is what I mean by planning. It’s not just about collecting your data. It’s about collecting your data to the necessary degree of precision, in the correct format, and making sure that it is fit-for-purpose and capable of answering your research questions.

Ask yourself if you’re sure that the data you plan to collect will fit into the nice, neat boxes and categories you’ve designed. If you’re not absolutely sure, then do a pilot study first – go out and collect some data. The data you collect might surprise you, and it might change the nature of your study.

So then you go back to the drawing board and plan some more. Keep doing it until you KNOW how your study will progress.

A wise man once said that ‘a well-formed question contains its own answer’. As far as I’m concerned, if you’ve planned your study well enough, you’ll already know what the outcome is likely to be.

Well, maybe, but at least there will be few surprises…

Data Cleaning Step 2: Learning How To Collect Data is Just as Important as Learning How to Clean Data!

Making sure that your data is as clean as it can be even before you start data cleaning is the best and easiest way to hold on to your sanity.

Of course, if your data is inherited from someone else there may be little you can do about it, but if you’re collecting your own data, deciding on a few standards before you get started will save a lot of pain later.

For example, if your dataset is small enough to fit on a single Excel worksheet, then enter your data in a single worksheet. If you enter it across multiple worksheets and then need to sort your data you’re likely to make mistakes that can’t easily be corrected. Oops – you’ve just screwed up your dataset and need to start again.

Most statistics and analysis packages require your data to be arranged so that each column is a single variable (Height, Weight, Inside Leg Measurement, etc.) and each row corresponds to a single sample (patient, test-tube, customer, etc.), so get into the habit of formatting your data like this. Oh yes, and row 1 is reserved for your variable names. Not 2, 3 or 4. Just 1.

I also highly recommend that you create a unique ID column in column A, numbered in consecutive integers. You’re going to need to sort your data by different columns and you’ll need a way to restore the original order, and this is the best and easiest way to do it.
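To see why the ID column matters, here is a minimal sketch in Python (used purely for illustration; in Excel you would simply sort on column A). The names and heights are made up: sorting by Height scrambles the entry order, and sorting on ID puts it back.

```python
# Made-up rows; "ID" holds consecutive integers assigned at data entry.
rows = [
    {"ID": 1, "Name": "Carol", "Height": 162},
    {"ID": 2, "Name": "Alice", "Height": 175},
    {"ID": 3, "Name": "Bob", "Height": 158},
]

# Sort by another column (here Height) to inspect the data...
by_height = sorted(rows, key=lambda row: row["Height"])
print([row["Name"] for row in by_height])  # ['Bob', 'Carol', 'Alice']

# ...then restore the original order by sorting on the ID column.
restored = sorted(by_height, key=lambda row: row["ID"])
print([row["Name"] for row in restored])  # ['Carol', 'Alice', 'Bob']
```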

Also, did you know that Excel has a built-in Data Entry Form that you can use to enter your data quickly and easily? It’s probably Excel’s best-kept secret – hardly anybody knows about it, but it’s a really useful feature.

Data Cleaning Step 3: The Moment You've Been Waiting For - How to Clean Data!

Data cleaning in Excel isn’t really about data cleaning. It’s about being organised. Anybody can clean data, but not everybody can clean data quickly and efficiently. Organising your Excel workbook before you get started with your data collection or data entry is a skill that is worth learning.

You should create a worksheet for your Raw Data, another for Cleaning In Progress, a third worksheet for Cleaned Data and one for Data For Analysis.

When you version control your data like this, you can view your dataset in various stages of preparation, and – if done correctly – when you discover an error in later worksheets you can follow the trail back to the point at which the error was introduced. I guarantee you’ll feel a flush of satisfaction when that happens!

Other sheets that you’ll need in your workbook include a Codes sheet, a Notes sheet and Spare Sheets 1, 2, 3, etc., where you’ll clean your data in independent columns. Well, you don’t expect to do your data cleaning in the same worksheet where your data is stored, do you? Does the Find & Replace feature work only on the column you’ve selected, or does it apply to the whole worksheet? Are you sure? Really REALLY sure?

And what about the Invisible Man? I really hate this guy. He lurks around in your dataset looking smug and self-satisfied. Well, at least, that’s what he would look like if you could see him! Leading and trailing spaces can wreak havoc on your analyses, so finding and removing them is a critical skill to have. Fortunately, Excel has a few formulae – including TRIM, CLEAN and SUBSTITUTE – that, when used in combination, can remove leading and trailing spaces and all non-printing characters from your entire dataset in as little as 60 seconds. Yup, you read that right – 60 seconds, irrespective of the size of your dataset! Learning this little trick can save weeks of data cleaning all on its own (for more details, check out the course at the bottom of this post!).
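The exact combination isn't spelled out here, so below is one common pattern, sketched in Python for illustration and not necessarily the version taught in the course. SUBSTITUTE swaps non-breaking spaces (CHAR(160), which TRIM ignores) for ordinary spaces, CLEAN drops the non-printing ASCII characters 0 to 31, and TRIM removes leading and trailing spaces and collapses internal runs of spaces to one. The helper names are hypothetical approximations of Excel's behaviour, and the formula in the comment assumes the value sits in cell A2.

```python
def excel_clean(text: str) -> str:
    """Approximate Excel's CLEAN: drop non-printing ASCII characters 0-31."""
    return "".join(ch for ch in text if ord(ch) > 31)


def excel_trim(text: str) -> str:
    """Approximate Excel's TRIM: strip leading/trailing spaces and collapse
    internal runs of ordinary spaces (CHAR(32)) to a single space."""
    return " ".join(part for part in text.split(" ") if part)


def excel_substitute(text: str, old: str, new: str) -> str:
    """Approximate SUBSTITUTE without instance_num: replace every occurrence."""
    return text.replace(old, new)


def clean_cell(text: str) -> str:
    # Roughly =TRIM(CLEAN(SUBSTITUTE(A2, CHAR(160), " "))) for a hypothetical cell A2.
    # Non-breaking spaces become ordinary spaces first, so TRIM can remove them.
    return excel_trim(excel_clean(excel_substitute(text, "\u00a0", " ")))


raw = "  Acme\u00a0 Ltd\t \n"  # leading spaces, a non-breaking space, a tab, a newline
print(repr(clean_cell(raw)))  # 'Acme Ltd'
```

Filling this down a helper column in one of your Spare Sheets, then pasting the results back as values, keeps the raw data untouched.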

Excel also has a plethora of other data cleaning tools that will help streamline the whole process, such as Remove Duplicates, Find & Replace, and the LOWER, UPPER and PROPER functions for standardising the case of your text data.
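Remove Duplicates keeps the first occurrence of each value and deletes later repeats. The Python sketch below (hypothetical helper name and made-up e-mail data, for illustration only) does the same, and it standardises case first with the equivalent of LOWER. That way the result doesn't depend on how a particular tool compares upper and lower case; this sketch compares values exactly, so without the lower-casing step 'Bob@Example.com' and 'bob@example.com' would survive as separate rows.

```python
def remove_duplicates(rows, key_columns):
    """Keep the first row for each combination of values in key_columns and
    drop later repeats. Values are compared exactly (case-sensitive)."""
    seen = set()
    kept = []
    for row in rows:
        key = tuple(row[col] for col in key_columns)
        if key not in seen:
            seen.add(key)
            kept.append(row)
    return kept


rows = [
    {"ID": 1, "Email": "alice@example.com"},
    {"ID": 2, "Email": "Bob@Example.com"},
    {"ID": 3, "Email": "ALICE@example.com"},
    {"ID": 4, "Email": "bob@example.com"},
]

# Standardise case first (like LOWER) so the exact comparison treats these as equal.
for row in rows:
    row["Email"] = row["Email"].lower()

unique_rows = remove_duplicates(rows, ["Email"])
print([row["ID"] for row in unique_rows])  # [1, 2]
```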

Here’s an example of how to combine these formulae for powerful effect. Let’s say we have some data that contains stray spaces and non-printing characters and is all in lower case. We could very easily transform it into perfectly clean proper-case data (with the first letter of each word capitalised).

Combining the TRIM, CLEAN and PROPER formulae into a single nested formula, such as =PROPER(TRIM(CLEAN(A2))) for a value in cell A2, would clean data like this very quickly.

All leading and trailing spaces are gone, leaving only a single space between words, all non-printing characters are gone and only the first letter of each word is capitalised. Even the continuous data has been cleaned without being affected by the PROPER part of the formula.

Perfect!
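For readers who like to see the logic spelled out, here is a rough Python sketch of a nested PROPER(TRIM(CLEAN(...))) formula. The helper names and sample values are hypothetical. The PROPER approximation follows Excel's documented rule: capitalise any letter that doesn't follow another letter and lower-case the rest.

```python
def excel_clean(text: str) -> str:
    """Approximate CLEAN: drop non-printing ASCII characters 0-31."""
    return "".join(ch for ch in text if ord(ch) > 31)


def excel_trim(text: str) -> str:
    """Approximate TRIM: strip ends and collapse runs of spaces to one."""
    return " ".join(part for part in text.split(" ") if part)


def excel_proper(text: str) -> str:
    """Approximate PROPER: upper-case a letter that follows a non-letter
    (or starts the text); lower-case every other letter."""
    out = []
    prev_is_letter = False
    for ch in text:
        if ch.isalpha():
            out.append(ch.lower() if prev_is_letter else ch.upper())
            prev_is_letter = True
        else:
            out.append(ch)
            prev_is_letter = False
    return "".join(out)


values = ["  john   smith\n", "MARY-ANNE o'neil ", "  42.5 "]
cleaned = [excel_proper(excel_trim(excel_clean(v))) for v in values]
print(cleaned)  # ['John Smith', "Mary-Anne O'Neil", '42.5']
```

Note that in this sketch the number comes back as the string '42.5': the digits are untouched by the PROPER step, but you would still convert the value back to a number before doing arithmetic with it.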

Data Cleaning Step 4: Coding, Calculating & Converting Your Data

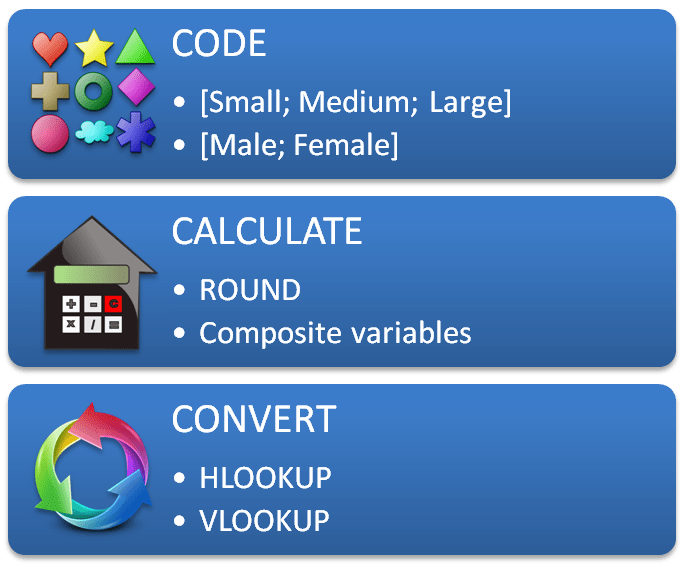

The 3 Cs - Code, Calculate and Convert - help you to get your dataset analysis-ready.

When you're collecting your data, you are likely to have entered the values as codes, such as [Small; Medium; Large] or [1; 2; 3]. You may think of them as categories, but they are actually codes that stand for something else: the size Small has a specific definition (height, width and so on).

It's important to make notes on what these codes mean - so you should enter the codes in a Codes worksheet and an explanation in a Notes worksheet!

Some data are collected directly and some are calculated. Height is collected, and so is Weight, but Body Mass Index is calculated (from Height and Weight). Sometimes collected data need to be rounded, and sometimes they should be placed into categories. For example, Weight may be measured in kilograms or in pounds and rounded to 1, 2 or 3 decimal places (using ROUND, ROUNDUP or ROUNDDOWN). Alternatively, it could be categorised as Underweight, Normal, Overweight or Obese. It all depends on what you plan to do with your data and how you wish to analyse them, but you will often need to perform calculations on them.
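The three rounding functions behave differently: ROUND rounds halves away from zero, ROUNDUP always rounds away from zero and ROUNDDOWN always rounds towards zero. If you ever reproduce these calculations outside Excel, be aware that Python's built-in round() rounds halves to even and works on binary floats, so it can disagree. Below is a minimal sketch using the standard decimal module; the helper names are hypothetical and only non-negative num_digits are handled.

```python
from decimal import Decimal, ROUND_DOWN, ROUND_HALF_UP, ROUND_UP


def _round(number: float, num_digits: int, mode: str) -> float:
    # str() makes 2.675 the decimal 2.675 rather than its binary approximation.
    quantum = Decimal(10) ** -num_digits  # e.g. Decimal('0.01') for 2 digits
    return float(Decimal(str(number)).quantize(quantum, rounding=mode))


def excel_round(number: float, num_digits: int) -> float:
    return _round(number, num_digits, ROUND_HALF_UP)  # halves away from zero


def excel_roundup(number: float, num_digits: int) -> float:
    return _round(number, num_digits, ROUND_UP)  # always away from zero


def excel_rounddown(number: float, num_digits: int) -> float:
    return _round(number, num_digits, ROUND_DOWN)  # always towards zero


weight_kg = 72.3456
print(excel_round(weight_kg, 1))      # 72.3
print(excel_roundup(weight_kg, 1))    # 72.4
print(excel_rounddown(weight_kg, 2))  # 72.34
print(excel_round(2.675, 2))          # 2.68 (built-in round(2.675, 2) gives 2.67)
```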

It’s quite a good idea to create a new worksheet, titled Calculated Data, and this is where you will create composite variables (like Body Mass Index), convert numerical variables to categories, and round your data.
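As an illustration of a composite variable, here is a Python sketch of Body Mass Index (weight in kilograms divided by the square of height in metres) together with a categorisation step. In a Calculated Data worksheet the first part might look like =B2/(C2/100)^2, where columns B (Weight, kg) and C (Height, cm) are hypothetical. The cut-offs below are the commonly used adult WHO ones; substitute whatever definitions your study has agreed on.

```python
def bmi(weight_kg: float, height_cm: float) -> float:
    """Body Mass Index = weight (kg) / height (m) squared."""
    height_m = height_cm / 100
    return weight_kg / height_m ** 2


def bmi_category(value: float) -> str:
    """Map a BMI value to a category using common adult cut-offs (assumed)."""
    if value < 18.5:
        return "Underweight"
    if value < 25:
        return "Normal"
    if value < 30:
        return "Overweight"
    return "Obese"


b = bmi(70, 175)
print(round(b, 1), bmi_category(b))  # 22.9 Normal
```

Keeping the calculation in its own worksheet (or function) means the collected Height and Weight values are never overwritten.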

You may also have stored your categorical variables as text, such as Small, Medium, Large. Will your favourite stats program allow the use of text variables, or will you have to convert them to integers? Here’s where you’ll make these conversions, and learning how to use VLOOKUP and HLOOKUP will help make this process as painless as possible.

If you check out the help file for VLOOKUP on Microsoft’s website it’ll show you how to look up the price of Brake Rotors in a Motor Supplies database. It’s not at all obvious that you can use VLOOKUP to convert data from one type to another.

For example, if you have a column of data for which the entries are 'Grade 1', 'Grade 2' and 'Grade 3', and you need these to be transformed into integers 1, 2 and 3, knowing how to use VLOOKUP is a very handy skill to have.
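Here is what an exact-match lookup such as =VLOOKUP(A2, Codes!A:B, 2, FALSE) does, sketched in Python. The sheet name, the helper name and the data are hypothetical. The `codes` table plays the role of your Codes worksheet: the first column is searched and the value from the requested column is returned. One difference to keep in mind is that Excel's VLOOKUP ignores case when matching text, whereas this sketch compares exactly.

```python
def vlookup(lookup_value, table, col_index_num):
    """Exact-match lookup in the spirit of VLOOKUP(..., FALSE).

    table is a list of rows; the first column is searched and the value in
    the 1-based col_index_num column is returned. Comparison is exact here.
    """
    for row in table:
        if row[0] == lookup_value:
            return row[col_index_num - 1]
    return "#N/A"  # Excel shows the #N/A error when nothing matches


# Hypothetical Codes sheet: text label -> integer code.
codes = [
    ("Grade 1", 1),
    ("Grade 2", 2),
    ("Grade 3", 3),
]

grades = ["Grade 2", "Grade 1", "Grade 3", "Grade 4"]
print([vlookup(g, codes, 2) for g in grades])  # [2, 1, 3, '#N/A']
```

The unmatched 'Grade 4' is a useful side effect: any #N/A in the converted column points straight at an entry that isn't in your Codes sheet.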

Data Cleaning Step 5: Data Integrity

The last of these 5 data cleaning steps is maintaining data integrity.

Real life follows rules, and so must your data. There have been many times when I’ve discovered patients in a dataset that are over 300 years old or who have an age less than zero. Calculating descriptive statistics can help you find values in your data that don’t break any Excel rules, but are incorrect nonetheless.

For numerical entries, learn how to use the formulae COUNT, MIN, MAX, and AVERAGE. For text entries, using COUNTIF can tell you how many entries of Small, Medium or Large you have in your variable. For empty cells, COUNTBLANK is a very useful formula to use.
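To make the behaviour of these functions concrete, here is a small Python sketch with made-up Age and Size columns, where None stands for an empty cell. Like COUNT, MIN, MAX and AVERAGE, it looks only at the numeric entries. The helper names are hypothetical, and the COUNTIF stand-in compares exactly, whereas Excel's COUNTIF ignores case for text.

```python
def numeric(values):
    """Numeric entries only, as COUNT/MIN/MAX/AVERAGE consider them."""
    return [v for v in values if isinstance(v, (int, float)) and not isinstance(v, bool)]


def countblank(values):
    """Empty cells, represented here as None or an empty string."""
    return sum(1 for v in values if v is None or v == "")


def countif(values, criterion):
    """Entries equal to criterion (exact comparison in this sketch)."""
    return sum(1 for v in values if v == criterion)


ages = [34, 51, None, -2, 0, 47, 312, None, 29]  # made-up data
sizes = ["Small", "Medium", "Large", "Small", None, "Medium", "Small"]

nums = numeric(ages)
summary = {
    "COUNT": len(nums),
    "MIN": min(nums),
    "MAX": max(nums),
    "AVERAGE": sum(nums) / len(nums),
    "COUNTBLANK": countblank(ages),
}
print(summary)
print({s: countif(sizes, s) for s in ("Small", "Medium", "Large")})  # {'Small': 3, 'Medium': 2, 'Large': 1}
```

Even this tiny summary already exposes the negative age, the zero, the 312-year-old and the two missing values.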

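If you really want to impress your boss, you can use Excel’s QUARTILE function to find the first and third quartiles of your numerical variables and flag values that lie far outside them (for example, more than 1.5 times the interquartile range beyond either quartile) as statistical outliers. Deciding what to do with these can make or break your analysis, so it pays to find them at an early stage.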
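Here is a minimal Python sketch of that quartile-based check, using the widely used 1.5 × IQR fences (a convention, not the only possible rule). Python's statistics.quantiles with method="inclusive" interpolates in the same way as Excel's QUARTILE (QUARTILE.INC), as far as I'm aware. The helper name and the heights are made up.

```python
import statistics


def iqr_outliers(values, k=1.5):
    """Return values outside [Q1 - k*IQR, Q3 + k*IQR] and the fences used."""
    q1, _median, q3 = statistics.quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    outliers = [v for v in values if v < low or v > high]
    return outliers, (low, high)


heights_cm = [162, 175, 158, 181, 169, 171, 166, 17, 1720]  # made-up data
outliers, fences = iqr_outliers(heights_cm)
print(outliers)  # [17, 1720]
print(fences)    # (142.5, 194.5)
```

Here Q1 is 162 and Q3 is 175, so anything below 142.5 cm or above 194.5 cm is flagged; the 17 and 1720 look like slipped decimal points, but that is a judgement call for you rather than the code.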

Here’s an example of how to build a table of descriptive statistics that lets you see at a glance what problems you might have in your data:

By looking only at the descriptive stats you can see that there are negative numbers in both Age and Height variables, which are clear errors. The maximum Ages and Heights are also likely to be incorrect (assuming the data are for humans rather than tortoises), there are entries of zero in both variables and there are missing datapoints.

There’s plenty of work to be done here, but by using these descriptive statistics you can very quickly identify the most obvious of these errors before you get started with your analysis.
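The same checks can be written down as explicit rules, so that they are applied consistently every time new data arrive. Below is a Python sketch that counts missing, negative and zero entries and lists out-of-range values for each variable. The plausible ranges in RULES and the data are hypothetical; set the limits from what you know about your own study.

```python
# Hypothetical plausibility limits for human data (inclusive).
RULES = {
    "Age": (0, 120),      # years
    "Height": (40, 250),  # cm
}


def check_variable(name, values):
    """Summarise integrity problems for one variable; None means missing."""
    low, high = RULES[name]
    present = [v for v in values if v is not None]
    return {
        "missing": sum(1 for v in values if v is None),
        "negative": sum(1 for v in present if v < 0),
        "zero": sum(1 for v in present if v == 0),
        "out_of_range": [v for v in present if not low <= v <= high],
    }


data = {  # made-up data
    "Age": [34, 51, None, -2, 0, 47, 312, 29],
    "Height": [162, 175, -158, 0, None, 1720, 169, 171],
}

for name, values in data.items():
    print(name, check_variable(name, values))
```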

Your Next Data Cleaning Step

You're not passionate about data cleaning, are you?

I mean, you didn't leave college or university saying "now that I'm free to forge my own path, I'm going to be a ... a data cleaner!"

That would be ridiculous and I'm not buying it!

No, you're here because you need to clean your data, and you want to know what the next steps are. Well, we're here to help.

I hope you've found this blog post useful, and to help you take your career to the next level we've created a series of video courses dedicated to data collection, data cleaning and data preparation to get your data analysis-ready in double quick time.

You can get the video course teaching how to clean data right here:
